- These notes were prepared while studying for technical interviews (e.g., Snap Inc., Krafton, etc.).

- Each entry contains a concise English summary, key math expressions, and excerpts from my original handwritten/typed study notes.

These notes cover the evolution from recurrent to attention-based sequence models, the mechanics of self-attention, the variants used to control its cost, and the adaptation of Transformers to images via patch tokens.

RNN

- sequence modeling architecture for sequential data

- explicitly models temporal dependencies

- processes inputs step by step in time order

- hidden state carries information across time steps

- same weights shared across time

- order-sensitive & variable-length input handling

- hidden state acts as a compressed summary of the past

Backpropagation Through Time (BPTT)

- unfold the RNN over time → apply backpropagation across time steps

- prone to vanishing or exploding gradients

- gradients flow backward through many steps

Limitations

- Vanishing gradients

- long-range dependencies are hard to learn

- Sequential computation

- no parallelism across time steps

- long-context modeling is inefficient

LSTM / GRU

- introduce gating mechanisms → mitigate vanishing gradient problem

- still sequential → scalability issues remain

RNN vs Transformer

- RNN

- strong inductive bias for sequence order

- sequential, memory-based

- Transformer

- attention-based global context

- fully parallelizable

- better long-range dependency modeling

Transformer

- sequence-to-sequence architecture based on attention

- parallel computation over sequence positions

Encoder

- input sequence → encoding (mapping) → contextualized representations (context-aware embeddings)

- Self-attention

- each token attends to all other tokens in the input sequence

- from the same sequence

- captures global context within representations

- each token attends to all other tokens in the input sequence

Decoder

- generate the output sequence autoregressively

- Masked self-attention

- prevent attending to future tokens

- previously generated tokens from the decoder

- causal mask → preventing future information leakage

- Cross-attention

- give attention over encoder outputs

- allowing the decoder to condition on the input

- query: decoder

- key, value: encoder

- give attention over encoder outputs

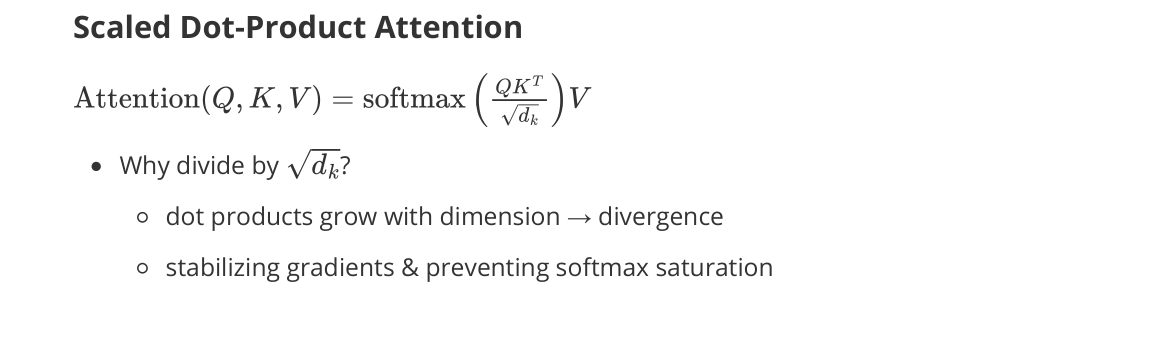

Scaled Dot-Product Attention

Why divide by $\sqrt{d_k}$?

- dot products grow with dimension → divergence

- stabilizing gradients and preventing softmax saturation

Multi-Head Attention

- each head attends to different subspaces → expressiveness ↑

- captures diverse relationships and different representation subspaces simultaneously

Attention Complexity

- computation: $O(n^2 d)$

- for calculating $QK^\top$

- memory: $O(n^2)$ for the attention weights matrix

Long-Context Attention Variants

Linear Attention

- rewriting attention using a kernel feature map $\phi$

- avoid forming $n \times n$ matrix $(QK^\top)$ → complexity ↓ (quadratic → linear)

- at the cost of approximating softmax attention

- asymptotic complexity: $O(n d^2) \approx O(n)$ (when $n \gg d$)

- first computing $\phi(K)^\top V \to O(n d^2)$ ($d \times d$ matrix)

- then calculating $\phi(Q) \cdot (\cdot) \to O(n d^2)$

- drawbacks: approximated softmax attention result

Sliding Window Attention

- each token attends only to a local window of neighboring tokens

- limiting receptive field → reducing quadratic attention cost

- pros: preserves local context well

- cons

- cannot directly capture long-range dependencies

- global information may be lost

Sparse / Flash Attention

- sparse attention (local + global mixtures)

- flash attention: I/O-aware exact attention with tiled computation

Positional Encoding

- Transformers are permutation-invariant (no notion of order) → inject positional information

- added to token embeddings as an additive bias

Sinusoidal Positional Encoding

- deterministic & parameter-free

- encodes absolute position only

- relative distance → not explicitly modeled in attention scores

- low-dimension pair → low frequency

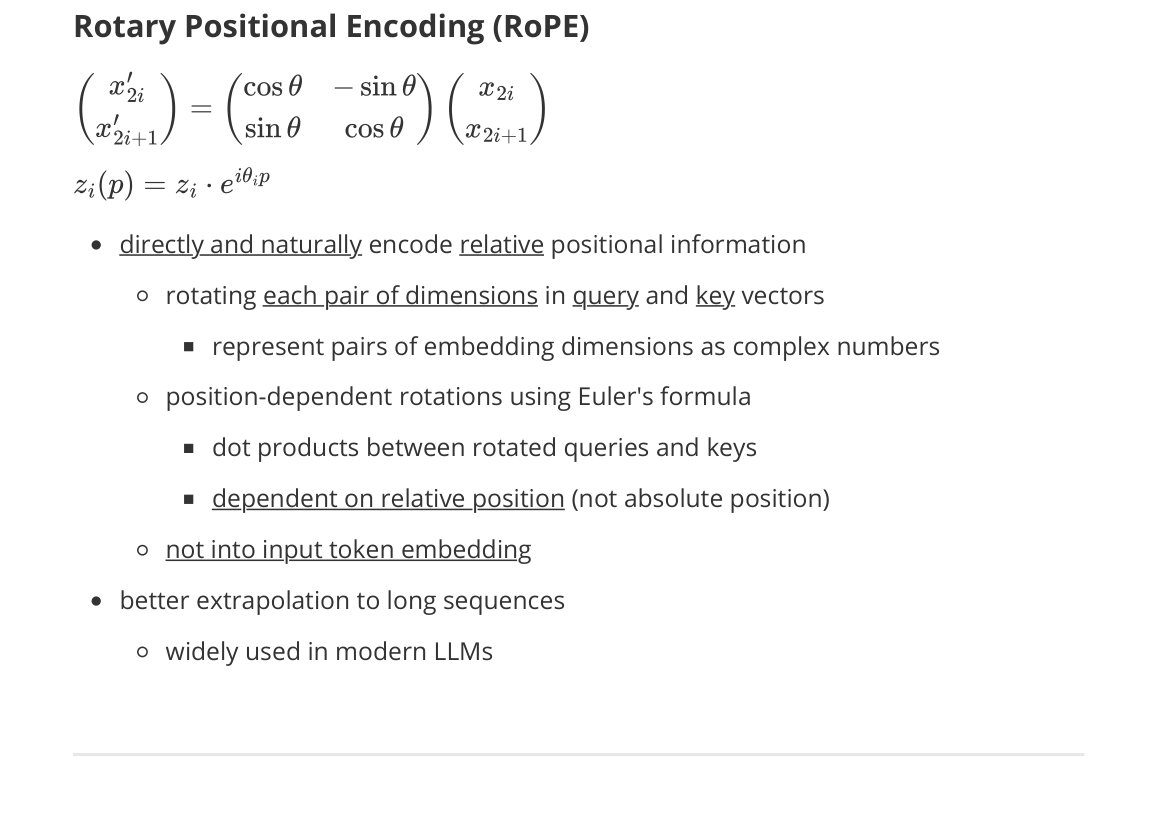

Rotary Positional Encoding (RoPE)

- directly and naturally encodes relative positional information

- rotates each pair of dimensions in query and key vectors

- represents pairs of embedding dimensions as complex numbers

- position-dependent rotations using Euler’s formula

- dot products between rotated queries and keys

- dependent on relative position (not absolute position)

- not added to input token embeddings

- rotates each pair of dimensions in query and key vectors

- better extrapolation to long sequences

- widely used in modern LLMs

Vision Transformer (ViT)

- applies the Transformer encoder to images

- image → sequence of patch tokens

- replaces convolution with self-attention

- global context modeling from the first layer

Key trade-off

- global modeling & scalability

- weaker inductive bias → large data required

- Inductive bias: what a model assumes is likely to be true about the world

- assumptions a model makes about the structure of the data before seeing any data

- Inductive bias: what a model assumes is likely to be true about the world

Patch Embedding

- split image into fixed-size patches

- flatten + linear projection → tokens

Positional Encoding

- inject spatial information into patch embeddings

- required due to permutation-invariant attention

[CLS] Token

![[CLS] Token](/images/archives/notes/09-sequence-models-p43-cls-token.png)

- prepend a learnable classification token to the patch sequence

- participates in self-attention with all patches

- aggregates global image information across layers

- final classification head applied to the [CLS] representation