- These notes were prepared while studying for technical interviews (e.g., Snap Inc., KRAFTON, etc.).

- Each entry contains a concise English summary, key math expressions, and excerpts from my original handwritten/typed study notes.

These notes cover the probabilistic foundations of ML: how to model uncertainty, update beliefs from data, and choose between maximum-likelihood and Bayesian objectives.

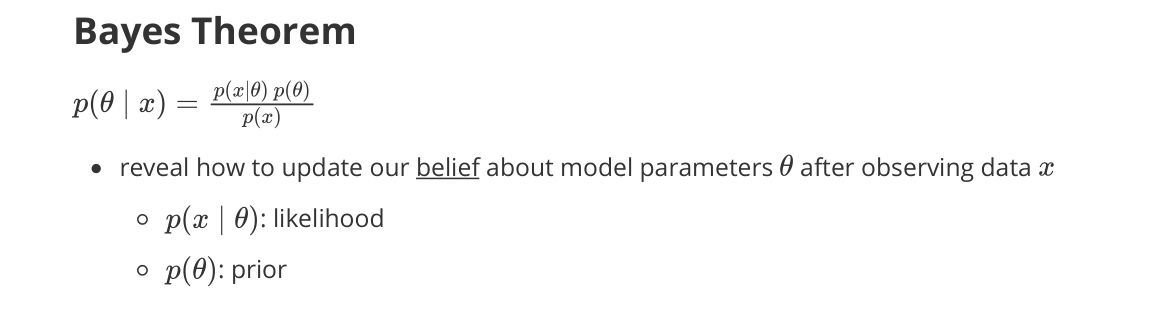

Bayes Theorem

- reveals how to update our belief about model parameters $\theta$ after observing data $x$

- $p(x \mid \theta)$: likelihood

- $p(\theta)$: prior

- $p(\theta \mid x)$: posterior

- $p(x)$: marginal likelihood (evidence)

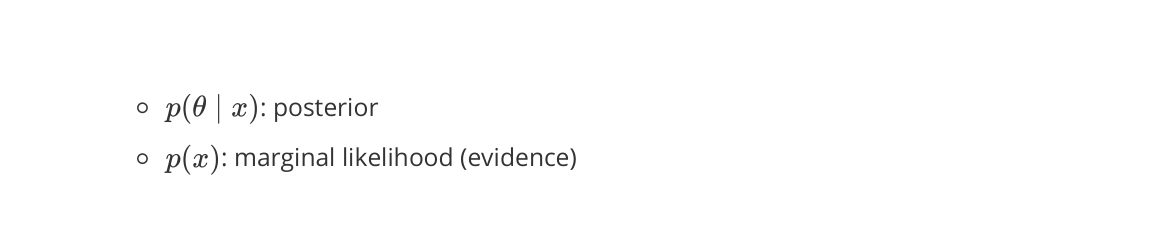

Likelihood

- a function of parameters

- measures how well a set of parameters $\theta$ explains the observed data

- not a probability distribution over the data $x$

- Gaussian model: $x \sim \mathcal{N}(\mu, \sigma^2)$

- likelihood: $p(x \mid \mu, \sigma^2) = \dfrac{1}{\sqrt{2\pi\sigma^2}}\, e^{-(x - \mu)^2 / (2\sigma^2)}$

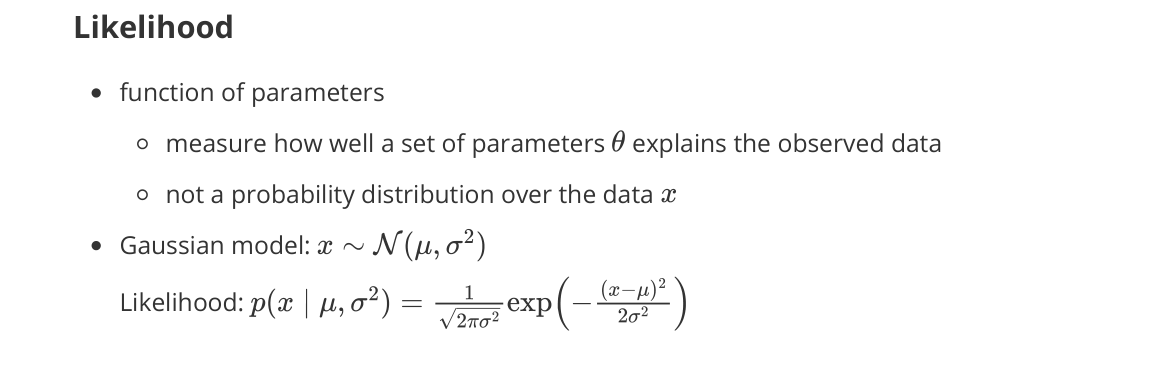

Maximum Likelihood Estimation (MLE)

- choose parameters that maximize the likelihood of the observed data

- without any prior belief

- how to define the objective

- not an optimization method itself (e.g., gradient descent)

- usually maximize log-likelihood: $\arg\max_\theta \log p(x \mid \theta)$

- usually corresponds to minimizing a negative log-likelihood loss

- e.g., MSE, cross-entropy

- usually corresponds to minimizing a negative log-likelihood loss

Maximum A Posteriori (MAP)

- similar to MLE but incorporates a prior belief about parameters $\theta$

- also likely under a prior distribution (regularization)

- if prior is uniform → MAP reduces to MLE

Probability Distributions

- modeling uncertainty of random variables

- mapping outcomes to probabilities

- discrete vs continuous distributions

- PMF (probability mass function) vs PDF (probability density function)

Bernoulli

- single binary trial (e.g., success / failure)

- $X \in {0, 1}$

- success probability $p$

Binomial

- number of successes $k$ in a fixed number of trials $n$

- independent Bernoulli trials

- success probability $p$

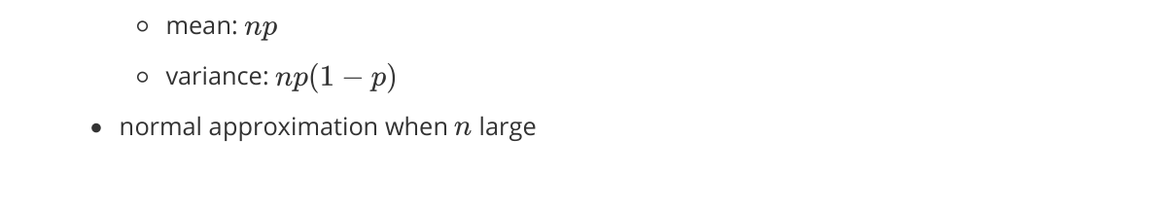

- Statistics

- mean: $np$

- variance: $np(1-p)$

- normal approximation when $n$ large

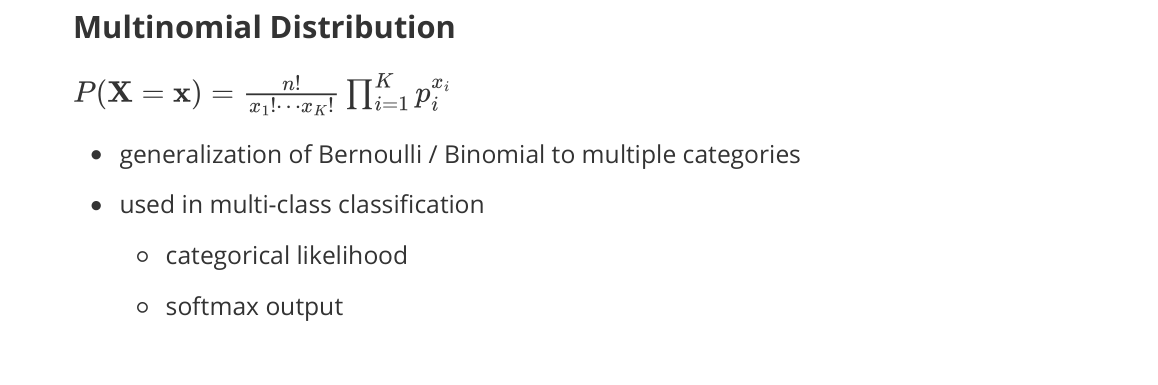

Multinomial

- generalization of Bernoulli / Binomial to multiple categories

- used in multi-class classification

- categorical likelihood

- softmax output

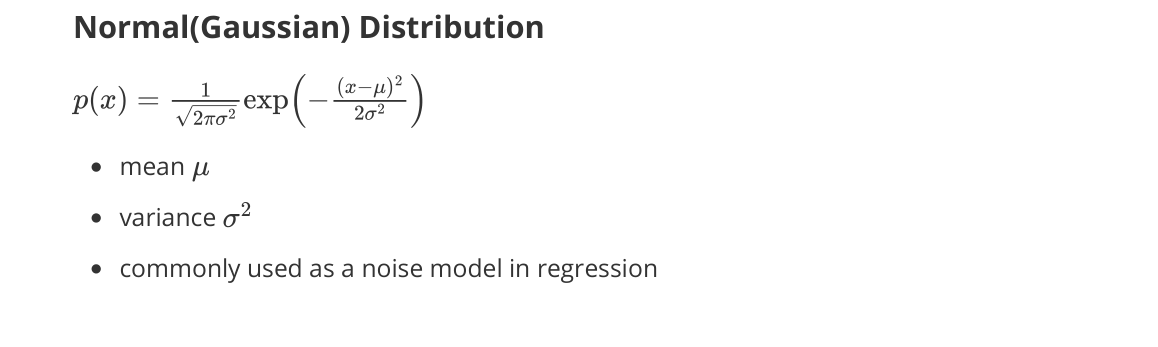

Normal (Gaussian)

- mean $\mu$, variance $\sigma^2$

- commonly used as a noise model in regression

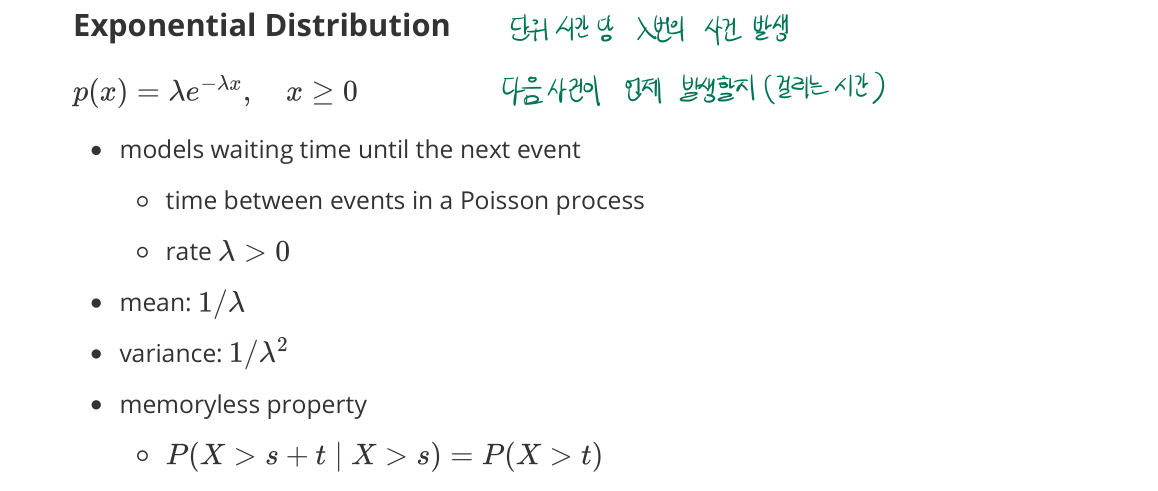

Exponential

- models waiting time until the next event

- time between events in a Poisson process

- rate $\lambda > 0$

- mean: $1/\lambda$

- variance: $1/\lambda^2$

- memoryless property

- $P(X > s + t \mid X > s) = P(X > t)$

- future waiting time independent of the past

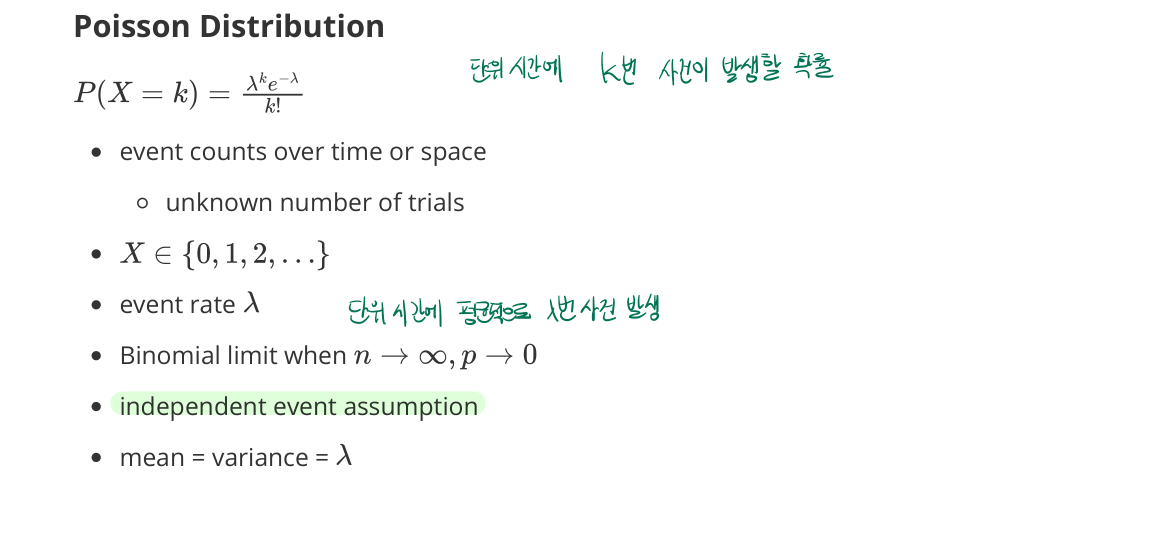

Poisson

- event counts over time or space

- unknown number of trials

- $X \in {0, 1, 2, \ldots}$

- event rate $\lambda$

- Binomial limit when $n \to \infty,\, np = \lambda$

- independent event assumption

- mean = variance = $\lambda$

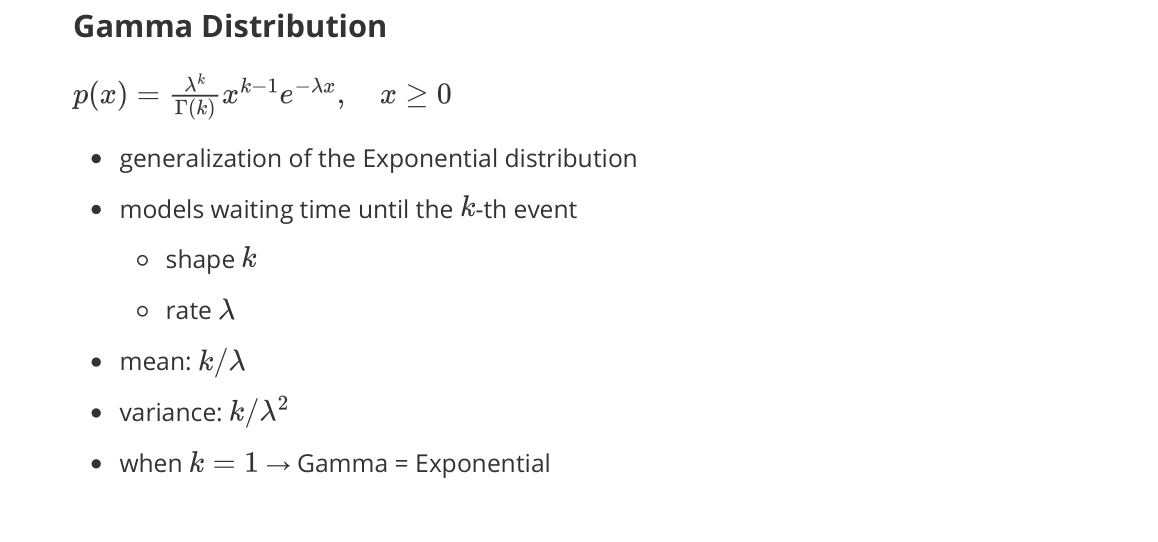

Gamma

- generalization of the Exponential distribution

- models waiting time until the $k$-th event

- shape $k$

- rate $\lambda$

- mean: $k / \lambda$

- variance: $k / \lambda^2$

- when $k = 1$ → Gamma = Exponential

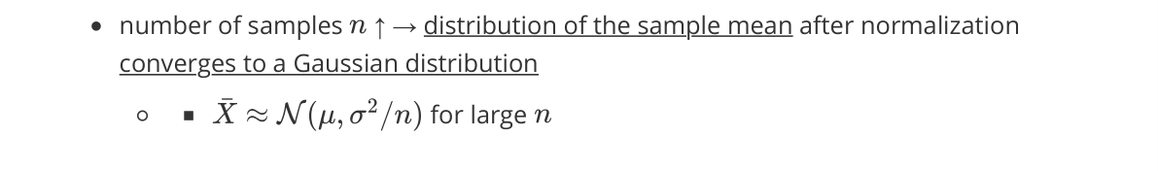

Central Limit Theorem (CLT)

- as the number of samples $n$ increases

- the distribution of the sample mean (after normalization) converges to a Gaussian distribution